A tech lead pings you on Slack: "we should move our Claude integrations to MCP." You ask what they have today. Four custom tools wired via function calling, running in production for the past eight months. You ask what's broken. "Nothing, but MCP is the standard now."

Same trap as the Agent SDK. The phrase "it's the standard" hides the fact that nobody in the room has actually articulated what MCP is supposed to fix. And when you don't know what you're fixing, you migrate for nothing.

MCP doesn't replace function calling. It standardizes how your tools are exposed, not how they're called.

What MCP actually is (and isn't)

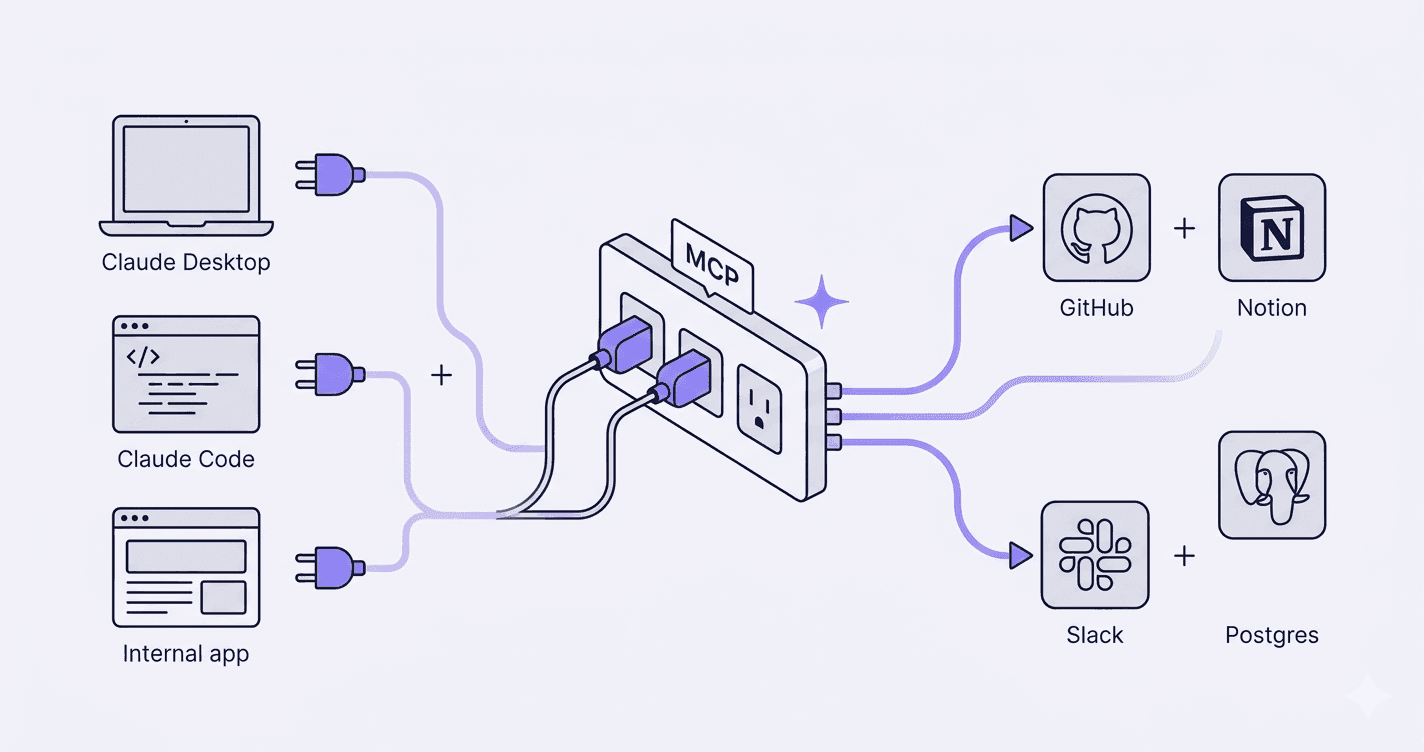

MCP, the Model Context Protocol, is an open client/server protocol for tool discovery, resource access, and prompt sharing between LLM applications and external systems. Anthropic published it, the spec is public, and it's already wired into Claude Desktop, Claude Code, Cursor, and a growing list of clients.

The problem it solves is integration math. Before MCP, you had N×M wiring: every LLM app had to write its own connector for every tool. Five clients, ten tools, fifty integrations to maintain. With MCP, you write the tool once as a server, and any MCP-compatible client can use it. N+M instead of N×M: fifteen implementations instead of fifty.
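The arithmetic is easy to check. A minimal sketch, where the function names are illustrative and "connector" means one client-to-tool integration:

```python
def connectors_without_mcp(clients: int, tools: int) -> int:
    """Every client needs its own connector for every tool."""
    return clients * tools


def implementations_with_mcp(clients: int, tools: int) -> int:
    """Each tool ships once as a server; each client speaks the protocol once."""
    return clients + tools


print(connectors_without_mcp(5, 10))    # 50
print(implementations_with_mcp(5, 10))  # 15
```

The gap widens with every client or tool you add, and it vanishes when either number is one.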

What MCP isn't: a new model, a function-calling replacement, or an orchestration layer. The model still calls tools the same way. The orchestration loop still belongs to your app or to the Agent SDK. MCP only handles the contract between client and tool. MCP and the Agent SDK are complementary layers, not competing ones.
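To make the split concrete: a tool advertised by an MCP server is described by a name, a description, and a JSON Schema under `inputSchema`, and the Anthropic Messages API expects nearly the same shape under `input_schema`. The sketch below converts one to the other. The `search_kb` tool and the helper name are hypothetical, and real client code would also handle optional fields this ignores.

```python
from typing import Any

# Hypothetical descriptor, shaped like one entry in an MCP `tools/list` result.
mcp_tool: dict[str, Any] = {
    "name": "search_kb",
    "description": "Search the internal knowledge base.",
    "inputSchema": {
        "type": "object",
        "properties": {"query": {"type": "string"}},
        "required": ["query"],
    },
}


def to_function_calling_tool(tool: dict[str, Any]) -> dict[str, Any]:
    """Map an MCP tool descriptor to the tool shape used for function calling."""
    return {
        "name": tool["name"],
        "description": tool.get("description", ""),
        "input_schema": tool["inputSchema"],
    }


api_tool = to_function_calling_tool(mcp_tool)
print(sorted(api_tool))  # ['description', 'input_schema', 'name']
```

The model sees the same thing either way. What changes is where the descriptor comes from: hard-coded in your app, or discovered from a server at runtime.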

MCP isn't a model feature. It's a standardized power outlet for your tools.

That framing matters. An outlet is useless if you have one device and one wall. It pays off the moment you have multiple devices that need the same power.

When MCP is worth it

Three scenarios where MCP earns its keep: cases where standardization stops being theoretical and starts saving real engineering time.

One tool, multiple clients

Your PMs use Claude Desktop. Your devs use Claude Code. Your support team uses an internal app you built on the API. All three need to query the same internal knowledge base. Without MCP, you write three connectors, you keep three connectors in sync, and every schema change ripples through all of them.

With MCP, you write one server. The three clients hit it the same way. New client tomorrow? It plugs in for free.
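What "the same way" means on the wire: MCP messages are JSON-RPC 2.0, and a client discovers tools with `tools/list` and invokes one with `tools/call`. The toy handler below shows only that message shape, in process, with no transport and no initialization handshake. It is not the official SDK, and `search_kb` plus its canned answer are made up for illustration.

```python
import json

TOOLS = [{
    "name": "search_kb",  # hypothetical tool
    "description": "Search the internal knowledge base.",
    "inputSchema": {
        "type": "object",
        "properties": {"query": {"type": "string"}},
        "required": ["query"],
    },
}]


def search_kb(query: str) -> str:
    # Stand-in for a real knowledge-base lookup.
    return f"Top result for {query!r}"


def handle(raw: str) -> str:
    """Answer one JSON-RPC request; every client sends the same two methods."""
    req = json.loads(raw)
    method, params = req["method"], req.get("params", {})
    if method == "tools/list":
        body = {"result": {"tools": TOOLS}}
    elif method == "tools/call" and params.get("name") == "search_kb":
        text = search_kb(**params["arguments"])
        body = {"result": {"content": [{"type": "text", "text": text}],
                           "isError": False}}
    else:
        # Simplified: a fuller server would tell unknown tools from unknown methods.
        body = {"error": {"code": -32601, "message": "Method not found"}}
    return json.dumps({"jsonrpc": "2.0", "id": req.get("id"), **body})


# Any client, desktop app or internal service, would send this same request.
call = {"jsonrpc": "2.0", "id": 1, "method": "tools/call",
        "params": {"name": "search_kb", "arguments": {"query": "refund policy"}}}
reply = json.loads(handle(json.dumps(call)))
print(reply["result"]["content"][0]["text"])
```

In practice you would reach for an MCP SDK instead of hand-rolling this, but the point stands: the server has one code path, however many clients connect.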

Tapping into a growing ecosystem

GitHub, Notion, Slack, Postgres, Stripe, Linear: the list of official and community MCP servers keeps expanding. If you want Claude to read GitHub issues, a server already exists. Same for Notion pages, Slack threads, Postgres queries. You install the server, point your client at it, and you're done.

Building those connectors yourself for one client is fine. Building them, maintaining them, and tracking upstream API changes is a part-time job nobody on your team signed up for.

Decoupling tool teams from app teams

In a slightly larger setup, the team that owns the CRM is not the team that builds the LLM app. With raw function calling, the app team writes the CRM tool, which means they own the schema, the auth, the rate limiting, the error handling. Every CRM change becomes an app team ticket.

With MCP, the CRM team ships an MCP server. The app team consumes it. Clear ownership, clear contract, fewer cross-team pings.

When MCP adds nothing

Three anti-cases. MCP isn't free. It adds a transport hop, a server to deploy, a spec to follow. If you don't need it, it's overhead.

One app, two homemade tools

Your internal Claude assistant calls two functions you wrote last month. They live in the same repo, they're consumed by one app, nobody else needs them. Wrapping them in an MCP server gives you a deployment to maintain and a network call to debug for zero portability gain. Raw function calling wins.
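For comparison, here is roughly what the no-MCP path looks like for two in-repo tools: a list of tool definitions to pass to the API and a dictionary that dispatches `tool_use` blocks to plain Python functions. The tool names and bodies are invented, and the API call itself is left out so the sketch runs offline.

```python
from typing import Any, Callable


def get_ticket_status(ticket_id: str) -> str:
    return f"Ticket {ticket_id}: open"  # stand-in for a real lookup


def count_open_tickets(team: str) -> str:
    return f"{team} has 3 open tickets"  # stand-in for a real query


# Definitions sent to the model in the `tools` parameter of a Messages API request.
TOOLS = [
    {"name": "get_ticket_status", "description": "Look up one ticket.",
     "input_schema": {"type": "object",
                      "properties": {"ticket_id": {"type": "string"}},
                      "required": ["ticket_id"]}},
    {"name": "count_open_tickets", "description": "Count open tickets for a team.",
     "input_schema": {"type": "object",
                      "properties": {"team": {"type": "string"}},
                      "required": ["team"]}},
]

HANDLERS: dict[str, Callable[..., str]] = {
    "get_ticket_status": get_ticket_status,
    "count_open_tickets": count_open_tickets,
}


def run_tool(tool_use: dict[str, Any]) -> dict[str, Any]:
    """Turn a `tool_use` content block into the matching `tool_result` block."""
    output = HANDLERS[tool_use["name"]](**tool_use["input"])
    return {"type": "tool_result", "tool_use_id": tool_use["id"], "content": output}


# A block shaped like what the model returns when it decides to call a tool.
block = {"type": "tool_use", "id": "toolu_01", "name": "get_ticket_status",
         "input": {"ticket_id": "T-42"}}
print(run_tool(block))
```

No server, no transport, nothing to deploy: the whole integration is a list and a dictionary in the same repo as the app.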

Hyper-specific tools with no expected reuse

A one-off tool that pulls data from a legacy ERP nobody else in the world runs your way. It's not going to be reused, there's no community server coming, and your one client is your one app. The standardization argument evaporates. Ship the function, move on.

Ultra-low-latency requirements

MCP adds a transport hop. For most use cases that's invisible: milliseconds at most. For latency-sensitive paths (real-time agents, voice loops, anything where you're already fighting for tens of milliseconds), the hop matters. Direct in-process function calls win on raw speed every time.
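If you want a feel for the cost before deciding, measure it. The sketch below times a trivial function called directly and then through a JSON round trip over a local socket pair. It is a stand-in for "a hop", not a benchmark of MCP itself: the `lookup` tool, the newline-delimited framing, and the call count are all assumptions, and the numbers will vary by machine.

```python
import json
import socket
import threading
import time
from typing import Callable


def lookup(key: str) -> str:
    return key.upper()  # trivial stand-in for a tool


def serve(conn: socket.socket) -> None:
    """Answer newline-delimited JSON requests until the peer closes."""
    with conn, conn.makefile("r") as reader, conn.makefile("w") as writer:
        for line in reader:
            result = lookup(json.loads(line)["key"])
            writer.write(json.dumps({"result": result}) + "\n")
            writer.flush()


def time_calls(fn: Callable[[str], str], n: int = 1000) -> float:
    """Average seconds per call over n calls."""
    start = time.perf_counter()
    for _ in range(n):
        fn("abc")
    return (time.perf_counter() - start) / n


# Connected socket pair; the server side runs in a background daemon thread.
client, server = socket.socketpair()
threading.Thread(target=serve, args=(server,), daemon=True).start()
reader, writer = client.makefile("r"), client.makefile("w")


def lookup_via_hop(key: str) -> str:
    """Same tool, reached through serialization plus a local socket."""
    writer.write(json.dumps({"key": key}) + "\n")
    writer.flush()
    return json.loads(reader.readline())["result"]


direct = time_calls(lookup)
hopped = time_calls(lookup_via_hop)
print(f"direct: {direct * 1e6:.1f} us/call, via local socket: {hopped * 1e6:.1f} us/call")
```

Compare the difference with your latency budget per tool call. If it disappears next to model inference time, the hop is not your problem.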

The classic trap

Two opposite mistakes, equally common.

The first: migrating everything to MCP because "it's the standard." Single-app, single-client setups suddenly grow a server fleet, a deployment pipeline, and a new on-call rotation. The team rewrites working tools for portability nobody asked for. Months of work for zero user-visible change.

The second: refusing MCP and rolling your own protocol. Some teams reinvent tool discovery, schema definitions, and transport from scratch. They end up with an in-house framework that nobody outside the company can plug into, locked out of the ecosystem of pre-built servers, and stuck maintaining infrastructure that exists already.

A standard is the default when you expect multiple consumers. Not a religion.

Both mistakes skip the same step: defining who actually consumes the tool.

My 3 decision questions

Before adopting MCP for a new integration, three questions:

- Will my tools or data sources be consumed by more than one LLM client?

- Does an official or community MCP server already exist for what I want to wire up?

- Do I need to decouple the team building the tool from the team building the LLM app?

If at least two of the three answers are yes, MCP pays off. Otherwise, raw function calling wins: less infrastructure, less surface area, less to debug.
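The rule is simple enough to write down. A sketch, with parameter names of my own choosing for the three questions:

```python
def mcp_pays_off(multiple_clients: bool, server_exists: bool, separate_teams: bool) -> bool:
    """Apply the two-out-of-three rule to the three decision questions."""
    return sum([multiple_clients, server_exists, separate_teams]) >= 2


# One app, homemade tools, one team: stay on function calling.
print(mcp_pays_off(False, False, False))  # False
# A tool shared by several clients and owned by another team.
print(mcp_pays_off(True, False, True))    # True
```

A single yes is a reason to keep watching, not a reason to migrate.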

The real question

MCP and the Agent SDK get conflated all the time. They sit on different layers. MCP is the outlet, the contract between your app and your tools. The Agent SDK is the engine, the orchestration loop that decides when to call which tool. They're complementary, not alternatives. You can use MCP without the SDK, the SDK without MCP, or both, depending on what you actually need.

The decision starts with the same question every time: who consumes this, and how complex is the loop? Get those two right, and the rest is just plumbing.

If you're weighing an MCP rollout and you're not sure whether the standardization gain justifies the migration, I run that diagnostic in 30 minutes. Head to the Claude consultant page to see how I work, or jump straight to the contact form if you'd rather skip the preamble.