Every other AI pitch I read this year mentions RAG. "We're deploying a RAG." "We need a RAG." "Our competitors have a RAG." Ask the same person what problem the RAG actually solves and the answer gets fuzzy fast.

RAG isn't a strategy. It's a building block. Useful in a handful of specific contexts, useless or harmful in others. Below: 5 use cases where RAG in business genuinely pays off, 3 where deploying one is a waste, and the architecture choices that separate a useful system from an expensive chatbot nobody trusts.

What RAG actually is, in 2 paragraphs for decision-makers

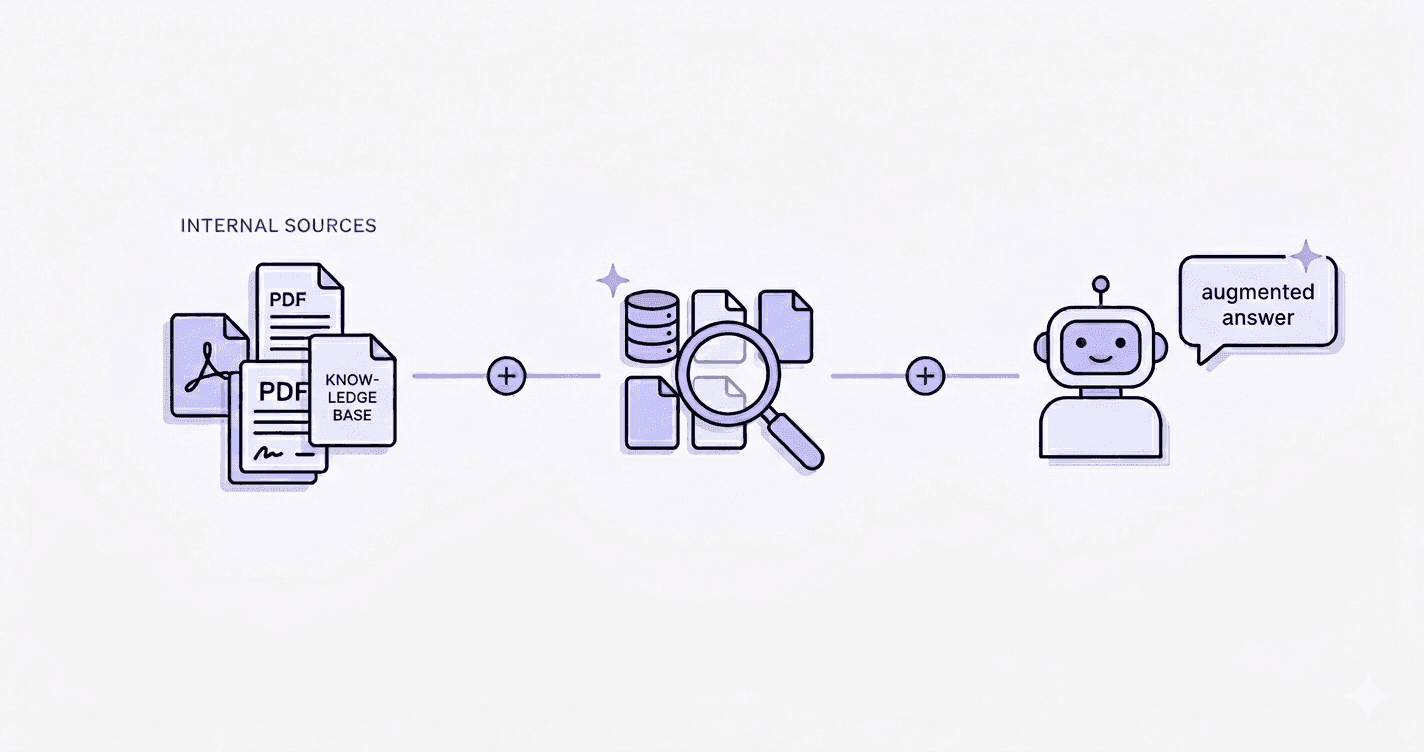

RAG stands for Retrieval-Augmented Generation. In plain terms: instead of asking a generic LLM ("answer this with what you know"), you first retrieve the right pieces of your own documents, then feed them to the LLM with the question. The model answers based on your data, not on what it picked up during training.

The difference from a standard ChatGPT chatbot: ChatGPT doesn't know your contracts, your internal procedures, your past sales proposals. A RAG built on your corpus does. It's not smarter, it's better informed. Think of it as an LLM with a librarian next to it, pulling the right files in real time before the model speaks. No technical diagram needed: search first, generate second, your documents in the loop.

Why RAG became a buzzword (and why that's a problem)

For most of 2024 and 2025, RAG got sold as the universal answer to "we want to do AI in our enterprise". Every vendor demo looked the same: clean question, beautiful answer with citations, applause in the room.

Then production hit. The team uploaded 4,000 messy PDFs. Chunking was off, half the retrievals missed the point, the LLM filled the gaps with plausible-sounding nonsense. Six months in, the tool was technically alive and operationally dead.

The pattern I see most often is "we did a RAG" syndrome. A company announces it deployed a RAG, internally and on LinkedIn. Nobody asks what business problem it solved or what KPI moved. The deployment itself is treated as the win. When the deliverable is the press release, you've shipped a buzzword, not a system.

A RAG isn't a project. It's a tool. If you can't name the metric it's supposed to move, you don't have a project, you have a demo.

5 use cases that actually work

Internal technical documentation

Who it speaks to: support and dev teams digging through Confluence, GitHub wikis, internal Notion pages.

The problem: docs are fragmented, full of outdated versions, the right answer is buried under three obsolete ones. Senior engineers get pinged on basics instead of doing real work.

RAG solution: index the technical corpus (docs, READMEs, runbooks, post-mortems), expose a chat interface in Slack or the IDE. Engineers ask in plain language, get an answer with citations.

Typical gain: 30 to 60% drop in repetitive internal questions. Easy to measure: count the "where is the doc for X" messages in Slack before and after.

HR knowledge base

Who it speaks to: HR teams swamped with the same questions about leave, expense reports, mobility policy.

The problem: the employee handbook is a 120-page PDF nobody reads. Policy changes get emailed once and forgotten. HR ends up as a human FAQ instead of focusing on hiring and people management.

RAG solution: a chatbot indexed on the handbook, internal procedures, recent HR announcements. Lives in the existing tool (Slack, Teams, intranet). Always cites the source.

Typical gain: 40 to 70% drop in level-1 tickets. The remaining ones actually need a human. HR time gets rerouted to higher-value work.

Contracts and clauses

Who it speaks to: legal, procurement, sales ops dealing with hundreds of contracts.

The problem: finding a specific clause across 500 contracts is a manual nightmare. "Which contracts have an auto-renewal clause with less than 60 days notice?" used to mean three days of paralegal work and a half-broken spreadsheet.

RAG solution: index all contracts, build a retrieval layer that handles legal language. The user asks in natural language, the system returns matching clauses with reference and paragraph.

Typical gain: what took days becomes minutes. Bigger effect: the team starts asking questions they wouldn't have asked before, because the cost was prohibitive.

Quotes and sales proposals

Who it speaks to: sales teams that rewrite the same proposals over and over, with slight variations per client.

The problem: every quote starts from a blank page or a Frankenstein copy-paste of three old ones. Inconsistent pricing, inconsistent wording, sometimes contradictory commitments across active deals. The "we already did this for someone similar" memory lives in one senior salesperson's head.

RAG solution: index the historical proposal database, tagged by industry, deal size, product line. New opportunity comes in, the system surfaces the most relevant past proposals and pre-drafts the structure.

Typical gain: turnaround cut by 40 to 60%. Juniors produce proposals close to senior-level quality on the first pass.

RFP responses

Who it speaks to: B2B companies answering dozens of RFPs per year with a deep archive of past responses.

The problem: RFP responses are 80% repetition (company info, security questionnaires, methodology, certifications) and 20% genuine customization. The 80% gets rewritten manually, badly. The team burns out on assembly work.

RAG solution: index past responses, security questionnaires, reference architecture documents. The system assembles a draft by pulling relevant sections, the team focuses on the 20% that requires real thinking.

Typical gain: time to first draft cut by half or more. Higher win rate where speed matters, and that's most RFPs.

3 contexts where RAG is useless (or worse)

Data that changes hourly

RAG lags by design. The vector index is built at a point in time. If your data changes every hour (stock levels, pricing, real-time orders, live KPIs), the RAG returns stale information. Worse, the LLM packages the staleness in fluent prose that hides it.

For data that just changed, you don't need a RAG. You need a direct query against the live system: a SQL agent, a connector to your database, an API call. Adding RAG there is adding latency and unreliability for no reason.

Low document volume

If your entire corpus is 30 PDFs and fits in a single LLM context window, you don't need a RAG. Drop the files into Claude Projects, ChatGPT Team, or NotebookLM and ask questions directly.

Modern context windows (200k tokens for Claude, 128k for GPT-4 class models) hold 300 to 500 pages of dense text. Below that volume, the retrieval layer adds engineering complexity and failure modes without adding value. Rough heuristic: under 50 documents or 500 pages, stay off-the-shelf. Above, RAG starts to earn its keep.
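The heuristic above is simple arithmetic. A rough sketch, assuming about 500 tokens per dense page (an illustrative figure in line with the 300 to 500 pages quoted for 200k tokens; real counts depend on the tokenizer and the layout) and a hypothetical reserve for the question and the answer:

```python
# Assumption: ~500 tokens per dense page (illustrative, not a measured value).
TOKENS_PER_PAGE = 500


def fits_in_context(pages: int, context_window: int, reserved: int = 20_000) -> bool:
    """Return True if the whole corpus plausibly fits in a single prompt.

    `reserved` keeps room for the question, the instructions, and the answer.
    """
    return pages * TOKENS_PER_PAGE <= context_window - reserved


print(fits_in_context(300, 200_000))    # 150,000 tokens against 180,000 available
print(fits_in_context(2_000, 200_000))  # 1,000,000 tokens: retrieval is needed
```

If the check passes with margin, the off-the-shelf route is worth trying first.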

Answers that require calculation, not retrieval

A RAG finds passages. It doesn't compute. Ask it "what's our revenue per region for Q1, weighted by product margin" and it will find the documents that mention revenue and margin. It can't do the math. The LLM bolted on top will try, but it is predicting text, not running a calculation, so the result can be wrong while sounding just as confident as a correct one.

For computational questions, you need a different pattern: text-to-SQL agent, code-execution sandbox, structured-data tool. Same family of AI, different building block. RAG is the wrong tool here, the same way you wouldn't open a spreadsheet to write a contract.
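To make the contrast concrete, here is a minimal sketch of the structured-data route using Python's built-in sqlite3 module. The table, the figures, and the reading of "weighted by product margin" as revenue multiplied by margin are all assumptions made for illustration. In a text-to-SQL setup, the LLM would write the query and the database would do the arithmetic.

```python
import sqlite3

# Hypothetical sales table and figures, invented for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, product TEXT, revenue REAL, margin REAL)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?, ?, ?)",
    [
        ("EMEA", "A", 100_000, 0.5),
        ("EMEA", "B", 50_000, 0.25),
        ("APAC", "A", 80_000, 0.5),
    ],
)


def margin_weighted_revenue(connection: sqlite3.Connection) -> dict:
    """Sum revenue * margin per region; the database computes, not the LLM."""
    rows = connection.execute(
        "SELECT region, SUM(revenue * margin) FROM sales "
        "GROUP BY region ORDER BY region"
    ).fetchall()
    return dict(rows)


result = margin_weighted_revenue(conn)
print(result)
```

The numbers come out of a deterministic engine, so the same question always gives the same answer.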

Minimum architecture of a useful RAG (plain English)

Three building blocks.

1. Ingestion. Take your documents (PDFs, Word, Notion, Confluence), clean, deduplicate, split into chunks the model can handle. Boring step. 80% of project quality lives here.

2. Vectorization and storage. Each chunk gets converted into a numerical vector by an embedding model and lands in a vector database. A user's question gets vectorized the same way, and the database returns the chunks closest in meaning.

3. Retrieval and generation. The retrieved chunks get passed to the LLM with the question. The LLM answers constrained by those chunks, ideally with citations.
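The three blocks above can be sketched in a few dozen lines. This is a toy under loud assumptions: `embed` is a bag-of-words counter standing in for a real embedding model, a Python list stands in for the vector database, the two chunks are invented, and the final prompt is printed instead of being sent to an LLM.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# 1. Ingestion: documents already cleaned and split into chunks (hypothetical content).
chunks = [
    {"source": "handbook.pdf", "text": "Employees accrue 25 days of paid leave per year."},
    {"source": "expenses.pdf", "text": "Expense reports must be submitted within 30 days."},
]

# 2. Vectorization and storage: a list plays the role of the vector database.
index = [(embed(c["text"]), c) for c in chunks]


# 3. Retrieval and generation: fetch the closest chunks, then build the prompt.
def retrieve(question: str, k: int = 1) -> list:
    q = embed(question)
    ranked = sorted(index, key=lambda item: cosine(q, item[0]), reverse=True)
    return [chunk for _, chunk in ranked[:k]]


def build_prompt(question: str) -> str:
    context = "\n".join(f"[{c['source']}] {c['text']}" for c in retrieve(question))
    return (
        "Answer using only the sources below and cite them.\n"
        f"{context}\n\nQuestion: {question}"
    )


print(build_prompt("How many days of paid leave do I get?"))
```

Swapping the toy parts for a real embedding model and a real vector store changes the quality of the matches, not the shape of the pipeline.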

Choices that actually matter, in order of impact:

- Chunking quality. Splitting a contract clause in the middle ruins retrieval. Spend time here.

- Freshness. Weekly batch is fine for HR. Real-time matters for active sales proposals.

- Access control. A sales rep should not retrieve salary data. Permissions problem disguised as a search problem.
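On the chunking point, one simple way to avoid cutting a clause in half is to split on paragraph boundaries and pack whole paragraphs into chunks. A minimal sketch, assuming paragraphs are separated by blank lines and using a hypothetical `max_chars` budget (production systems usually count tokens and often add overlap between chunks):

```python
def chunk_by_paragraph(text: str, max_chars: int = 800) -> list[str]:
    """Pack whole paragraphs into chunks of at most max_chars characters.

    A paragraph is never split. One that exceeds max_chars on its own
    becomes a single oversized chunk instead of being cut in the middle.
    """
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    chunks, current = [], ""
    for para in paragraphs:
        candidate = f"{current}\n\n{para}" if current else para
        if len(candidate) <= max_chars or not current:
            current = candidate
        else:
            chunks.append(current)
            current = para
    if current:
        chunks.append(current)
    return chunks


# Hypothetical contract text, invented for illustration.
contract = (
    "Clause 1. The agreement renews automatically each year.\n\n"
    "Clause 2. Either party may terminate with 60 days notice.\n\n"
    "Clause 3. Fees are invoiced quarterly."
)
for chunk in chunk_by_paragraph(contract, max_chars=120):
    print(repr(chunk))
```

Every chunk starts and ends on a clause boundary, which is what lets the retrieval step return a complete clause with its reference.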

Tools in 2026. Off-the-shelf: Claude Projects, Notion AI, NotebookLM cover most peripheral use cases without writing a line of code. Custom builds converge around LangChain or LlamaIndex for orchestration, Pinecone or Qdrant for vector storage, OpenAI or a specialist provider such as Voyage AI or Cohere for embeddings, and OpenAI or Anthropic for generation (Anthropic does not ship its own embedding model). The components matter less than the discipline on the boring parts.

RAG belongs in the peripheral level (level 1) of a progressive AI integration approach. Start where the impact on your operational core is invisible, prove the value, then move up. Deploying a custom production-grade RAG as your first AI project is how organizations burn six months and a budget for nothing.

4 mistakes that kill a RAG project

1. No access governance. Everyone queries the same index with the same permissions. A sales rep asks a question and the system returns a chunk from an internal compensation document. One incident kills internal trust forever. Fix it upfront: every document tagged with access rights, every query inheriting the user's permissions.

2. No accuracy measurement. Most RAG projects ship without a single evaluation metric. "Does it feel good?" isn't a metric. You need a test set of representative questions with expected answers, run periodically. Without it, you're deploying blind, and the first hallucination in front of a client is your only feedback signal.

3. RAG on poorly structured data. Scanned PDFs without OCR, documents in five languages mixed together, duplicates of the same contract in three folders. The retrieval surfaces the wrong chunks, the LLM fills the gaps with confident invention. The fix isn't a better model. It's three weeks cleaning the corpus before indexing.

4. No "I don't know" fallback. Out of the box, LLMs hate admitting ignorance. Without explicit guardrails, the model invents an answer rather than saying "this isn't in the documents". Every production RAG needs a hard fallback: if retrieval returns nothing relevant, the system must say so. "I don't know" is a feature, not a flaw.
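Mistakes 1, 2 and 4 have fixes small enough to sketch together. Everything here is illustrative: the two-chunk index, the group names, the word-overlap `score` (a stand-in for real vector similarity), and the `min_score` threshold of 0.3 are assumptions, not recommended values.

```python
import re

# Hypothetical index: each chunk carries the groups allowed to read it.
INDEX = [
    {"text": "Annual leave is 25 days per year.", "source": "handbook.pdf", "groups": {"all"}},
    {"text": "Sales director salary band is level 7.", "source": "comp.pdf", "groups": {"hr"}},
]


def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def score(question: str, chunk_text: str) -> float:
    """Toy relevance score: share of question words found in the chunk."""
    q = tokens(question)
    return len(q & tokens(chunk_text)) / len(q) if q else 0.0


def answer(question: str, user_groups: set[str], min_score: float = 0.3) -> str:
    # Mistake 1: filter by permissions before ranking, so forbidden chunks never surface.
    allowed = [c for c in INDEX if c["groups"] & user_groups]
    best = max(allowed, key=lambda c: score(question, c["text"]), default=None)
    # Mistake 4: hard fallback when nothing relevant enough was retrieved.
    if best is None or score(question, best["text"]) < min_score:
        return "I don't know: this isn't in the documents you can access."
    return f"{best['text']} (source: {best['source']})"


# Mistake 2: a small test set of (question, user groups, expected fragment).
TEST_SET = [
    ("How many days of annual leave per year?", {"all"}, "handbook.pdf"),
    ("What is the sales director salary band?", {"all"}, "I don't know"),
    ("What is the sales director salary band?", {"all", "hr"}, "comp.pdf"),
]


def accuracy() -> float:
    """Share of test questions whose answer contains the expected fragment."""
    hits = sum(expected in answer(q, groups) for q, groups, expected in TEST_SET)
    return hits / len(TEST_SET)


print(accuracy())
```

The second test case matters most: the same question must produce a refusal for a sales rep and a sourced answer for HR. Running the test set on every index or prompt change is what turns "does it feel good?" into a number.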

When to bring in a consultant vs going internal

Three signals say internal is enough: low document volume, non-sensitive data, no integration with operational systems. Claude Projects or Notion AI deployed by one motivated person on your team will cover 80% of the value. Save the consulting budget.

Three signals say custom is needed: thousands of documents with permission complexity, sensitive data requiring controlled deployment (on-prem, EU residency, audit trail), or integration with your CRM, ERP, ticketing system. That's where off-the-shelf won't scale and architecture choices start to matter a lot.

Most common path: start with Claude Projects to learn what your team actually queries. Use that as the spec for the custom build. The POC teaches you the questions you didn't know to ask.

If your case sits in the custom zone or you're not sure, that's what a Data and AI consulting engagement is designed to clarify before you commit to a build path.

FAQ

How much does a RAG cost for SMBs in 2026?

Off-the-shelf (Claude Projects, Notion AI, NotebookLM): the cost is the subscription, €20 to €60 per user per month. Deployed in days.

Custom light scope (single corpus, one team, no integration): €8k to €20k build, plus €200 to €1,500 per month for hosting and LLM API.

Custom production (multi-corpus, permissions, integrations, governance): €25k to €60k build, plus API and infrastructure costs. Most of the upfront cost goes to data preparation and evaluation, not the LLM.
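To compare options on the same basis, it helps to turn these ranges into a first-year total. A small worked sketch using the figures above; the team size of 25 users is a hypothetical, and the custom production tier is left out because its running costs are not quantified here.

```python
def first_year_range(
    build: tuple[int, int], monthly: tuple[int, int], months: int = 12
) -> tuple[int, int]:
    """Low and high first-year totals in euros: build cost plus running cost."""
    return (build[0] + monthly[0] * months, build[1] + monthly[1] * months)


USERS = 25  # hypothetical team size

# Off-the-shelf: no build cost, EUR 20 to 60 per user per month.
off_the_shelf = first_year_range((0, 0), (20 * USERS, 60 * USERS))

# Custom light scope: EUR 8k to 20k build, EUR 200 to 1,500 per month.
custom_light = first_year_range((8_000, 20_000), (200, 1_500))

print(off_the_shelf)  # (6000, 18000)
print(custom_light)   # (10400, 38000)
```

At this team size the two ranges overlap, so the decision rests on scope (permissions, integrations) more than on price.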

How long does a RAG deployment take?

Off-the-shelf: days. Custom light: 4 to 6 weeks, most of it in ingestion and evaluation. Custom production grade with access control and integrations: 8 to 16 weeks, governance taking longer than the engineering.

What's the difference between fine-tuning and RAG?

Fine-tuning trains the model on your data. The model "learns" your information. Expensive, slow to update, hard to undo when source data changes.

RAG leaves the model untouched and feeds it the right context at query time. Cheap to update (re-index when documents change), no training cost, easier to audit.

For 95% of business use cases, RAG is the right choice. Most companies that think they "need fine-tuning" actually need a well-built RAG.
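The "re-index when documents change" part is where the cost difference shows. One common approach, sketched here with hypothetical document ids and an in-memory dictionary standing in for the stored index metadata, is to fingerprint each document and re-embed only what changed:

```python
import hashlib


def fingerprint(text: str) -> str:
    """Stable content hash used to detect changed documents."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_reindex(documents: dict[str, str], indexed: dict[str, str]) -> tuple[list, list]:
    """Compare current documents with the fingerprints stored at last indexing.

    documents: {doc_id: current text}
    indexed:   {doc_id: fingerprint recorded when the document was indexed}
    Returns (ids to embed again, ids to delete from the index).
    """
    to_embed = sorted(
        doc_id for doc_id, text in documents.items()
        if indexed.get(doc_id) != fingerprint(text)
    )
    to_delete = sorted(doc_id for doc_id in indexed if doc_id not in documents)
    return to_embed, to_delete


# Hypothetical state: one policy edited, one unchanged, one removed, one new.
indexed = {
    "leave-policy": fingerprint("25 days"),
    "expenses": fingerprint("submit within 30 days"),
    "old-memo": fingerprint("obsolete"),
}
documents = {
    "leave-policy": "27 days",
    "expenses": "submit within 30 days",
    "mobility": "new policy",
}
print(plan_reindex(documents, indexed))
```

An edited policy costs one re-embedding of one document. The equivalent update to a fine-tuned model is a new training run.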

Does my data go to OpenAI or Anthropic if I deploy a RAG?

Depends on the deployment. With default APIs, queries and retrieved chunks pass through the vendor's infrastructure. Both OpenAI and Anthropic offer business and enterprise tiers that exclude your data from training and provide audit trails. Read the contract, don't assume.

If data residency rules that out: Azure OpenAI (EU regions), self-hosted open-source models (Llama, Mistral, Qwen), or hybrid setups where embeddings stay in-house. For regulated industries (healthcare, finance, legal), self-hosting or Azure is usually the answer.

Bottom line

RAG isn't an AI strategy. It's a building block. Useful in five specific contexts (technical docs, HR, contracts, sales proposals, RFPs), useless or harmful in three others (real-time data, low volume, computational questions).

The interesting question isn't "should we deploy a RAG?". It's "do we have a measurable internal knowledge retrieval problem worth solving?". If yes, RAG is probably part of the answer. If no, deploying one to look modern is the most expensive way to ship a buzzword.

If you want a 30-minute conversation to figure out whether RAG actually fits a problem you have, the contact form is right there. No slides, no pitch.